Hey Siri, Tell Me About Advanced Speech Recognition

The Solution: Embedded Advanced Speech Processing

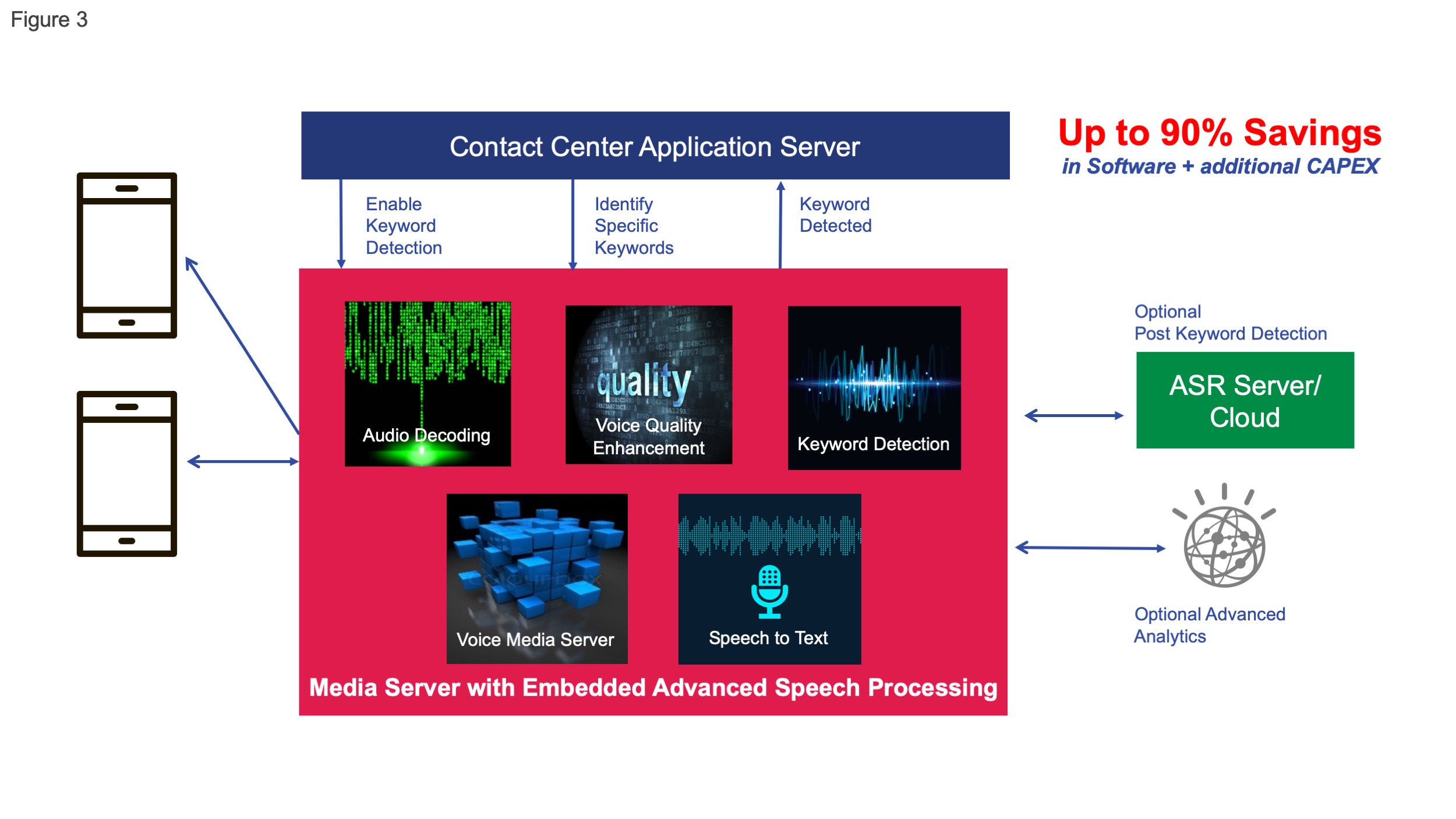

Service providers can now embed advanced speech processing into software-based media server technology using platforms that are already deployed in their networks. This approach eliminates the need to purchase one-size-fits-all speech recognition engines for every scenario or to support costly integration with other elements in the network.

The software-based media server runs on off-the-shelf servers and exposes a full set of media server capabilities, including speech recognition, through a consistent set of open APIs. It decouples speech recognition processing from any specific device, thereby extending the application reach. By leveraging open APIs, service providers and application developers can simplify integration with any application server and support a variety of speech engines. Each speech engine has different strengths, so service providers can choose the one that best fits each application. Combining wake-word and natural language technology can reduce CapEx and OpEx by up to 90 percent. The saving comes from using an in-call wake word to determine the action required: the solution invokes the expensive full natural-language processing platform only when it is needed, which significantly reduces the overall cost.
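The wake-word gating described above can be illustrated with a minimal sketch. It assumes the media server already delivers each utterance as text; the `WakeWordGate` class, the `nlu_engine` callable, and the `nlu_calls` counter are hypothetical names introduced only for this illustration and are not part of any product API.

```python
from dataclasses import dataclass
from typing import Callable, Optional

WAKE_WORD = "hey sophie"  # wake word used in this document's examples


@dataclass
class WakeWordGate:
    """Forward an utterance to the costly NLU engine only when the wake word is heard."""

    nlu_engine: Callable[[str], str]  # hypothetical full natural-language engine
    wake_word: str = WAKE_WORD
    nlu_calls: int = 0  # how many times the costly engine was actually invoked

    def process(self, utterance: str) -> Optional[str]:
        text = utterance.lower().strip()
        idx = text.find(self.wake_word)
        if idx == -1:
            # Ordinary conversation: nothing is sent to the NLU engine.
            return None
        # Keep only the words that follow the wake word.
        command = text[idx + len(self.wake_word):].strip(" ,.")
        self.nlu_calls += 1
        return self.nlu_engine(command)


# Stand-in for a real speech engine, used only to exercise the gate.
gate = WakeWordGate(nlu_engine=lambda cmd: f"intent:{cmd}")
gate.process("So how was your weekend?")
gate.process("Hey Sophie, add my manager to the call")
print(gate.nlu_calls)
```

Only the second utterance reaches the stand-in engine, which is the cost-limiting behavior the paragraph describes: ordinary call audio never touches the full natural-language platform.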

The embedded solution with voice quality enhancements also overcomes the challenges associated with various call quality conditions that previously required additional network elements to improve the user experience.

Figure 3. Embedded advanced speech processing for in-call speech recognition capabilities

Real-World Use Cases for Advanced Speech Recognition

Communications service providers can support a wide range of new applications with in-call speech processing and media analytics embedded in their networks, creating opportunities for new revenue. During a phone call or conference call, the caller can say a wake word that launches the application. These applications include:

- Embedded “in-call” digital speech assistant (peer to peer, multi-party calls)

- Real-time speech transcription and speech analytics

- IoT-triggered conversational speech services and analytics

In the following examples, the wake word is “Hey Sophie.”

During a call with a contact center, John can interact with a voice-enabled bot by speaking to it, with his speech processed by the media server. If a key phrase such as “upgrade phone” or “technical issue” is detected, the bot can send messages to the contact center and direct the call to the appropriate person. The agents also have the wake word available to them: they can say “Hey Sophie” to escalate the call by adding a manager or by transferring the call to a tech expert, billing, or another appropriate agent.
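The key-phrase routing in this contact center example could be sketched as follows. The two phrases come from the text; the queue names and the `route_call` function are assumptions made for illustration, and a real deployment would work from the speech engine's output rather than a plain string.

```python
from typing import Optional

# Key phrases from the example above, mapped to hypothetical queue names.
ROUTES = {
    "upgrade phone": "sales",
    "technical issue": "tech_support",
}


def route_call(transcript: str) -> Optional[str]:
    """Return the queue for the first key phrase found, or None if none matches."""
    text = transcript.lower()
    for phrase, queue in ROUTES.items():
        if phrase in text:
            return queue
    return None  # no key phrase detected: the call stays with the bot


print(route_call("I have a technical issue with my router"))
```

A simple substring match keeps the sketch short. It would miss paraphrases such as "my phone needs an upgrade", which is where a fuller natural-language engine would come in.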